Tsinghua University, Huawei, and others propose iVideoGPT, a model specializing in interactive world models

iVideoGPT is designed to meet the high interactivity demands of world models.

Paper address: https://arxiv.org/pdf/2405.15223

Paper title: iVideoGPT: Interactive VideoGPTs are Scalable World Models

The initial context frames contain rich contextual information, and each is tokenized and reconstructed independently using N tokens.

This tokenization design has two benefits. First, it significantly reduces the sequence length of the tokenized video: the length still grows linearly with the number of frames, but at the much smaller rate of n tokens per frame. Second, through conditional encoding, the transformer that predicts subsequent tokens can more easily maintain temporal consistency with the context and focus on modeling only the necessary dynamic information.
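The sequence-length saving can be illustrated with a small calculation. This is a minimal sketch, assuming that context frames cost N tokens each and every later frame costs n tokens; the function names and the concrete values of N, n, and the frame counts are illustrative assumptions, not figures taken from the article.

```python
def tokens_independent(num_frames: int, N: int) -> int:
    """Sequence length if every frame is tokenized independently with N tokens."""
    return num_frames * N


def tokens_compressive(num_context: int, num_future: int, N: int, n: int) -> int:
    """Sequence length if context frames use N tokens and later frames use n tokens."""
    return num_context * N + num_future * n


# Illustrative values only (assumptions, not taken from the article).
N, n = 256, 16
context, future = 2, 14

baseline = tokens_independent(context + future, N)
compressed = tokens_compressive(context, future, N, n)
print(baseline, compressed)  # prints "4096 736"
```

Both lengths grow linearly with the number of frames, but each extra frame adds only n tokens instead of N in the second case.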

Special slot tokens [S] are inserted to delineate frame boundaries and to facilitate the fusion of additional low-dimensional modalities such as actions. As shown in Figure 3b, a GPT-like autoregressive transformer performs interactive video prediction by generating next tokens frame by frame. In this work, the team used the model size of GPT-2 but adapted the LLaMA architecture in order to take advantage of recent innovations in LLM architectures, such as rotary position embedding.
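The token layout and the frame-by-frame generation loop can be sketched as follows. This is a rough illustration under stated assumptions, not the authors' implementation: the slot id `SLOT`, the helper names, the exact placement of the slot token (before every frame after the first), and the `dummy_next_token` stand-in for the transformer are all hypothetical.

```python
from typing import Callable, List

SLOT = -1  # hypothetical id standing in for the [S] slot token


def flatten_with_slots(frames: List[List[int]], slot: int = SLOT) -> List[int]:
    """Concatenate per-frame token lists, marking each frame boundary with a slot token."""
    seq: List[int] = []
    for i, tokens in enumerate(frames):
        if i > 0:
            seq.append(slot)  # boundary before every frame after the first
        seq.extend(tokens)
    return seq


def rollout(
    seq: List[int],
    next_token: Callable[[List[int]], int],
    num_frames: int,
    tokens_per_frame: int,
    slot: int = SLOT,
) -> List[int]:
    """Generate frames one at a time, each token conditioned on everything before it."""
    seq = list(seq)
    for _ in range(num_frames):
        seq.append(slot)  # start of a new frame; an action could be fused here
        for _ in range(tokens_per_frame):
            seq.append(next_token(seq))
    return seq


def dummy_next_token(seq: List[int]) -> int:
    # Placeholder predictor (assumption): a real model would run the transformer here.
    return len(seq) % 7


context = flatten_with_slots([[1, 2, 3, 4], [5, 6, 7, 8]])
predicted = rollout(context, dummy_next_token, num_frames=2, tokens_per_frame=2)
print(predicted)
```

Because generation pauses at each slot token, a caller can supply a new action before every predicted frame, which is what makes this style of prediction interactive.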

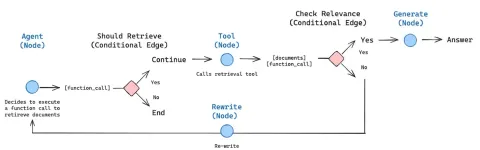
