To learn more about AIGC, please visit:

51CTO AI.x Community

https://www.51cto.com/aigc/

Translator | Jingyan

Reviewer | Chonglou

This article is different from the traditional question banks that can be found everywhere on the Internet. These questions require outside-the-box thinking.

Large language models (LLMs) are increasingly important in data science, generative artificial intelligence (GenAI), and artificial intelligence in general. These complex algorithms enhance human skills and drive efficiency and innovation in many industries, becoming key for companies that want to remain competitive. LLMs have a wide range of applications, including natural language processing, text generation, speech recognition, and recommendation systems. By learning from large amounts of data, an LLM can generate text, answer questions, hold conversations with humans, and provide accurate and valuable information. GenAI relies on LLM algorithms and models to generate a variety of creative output. However, although GenAI and LLMs are becoming more and more common, detailed resources that explain their complexity in depth are still lacking. Newcomers to the field often feel they are in unknown territory when interviewing about the functions and practical applications of GenAI and LLMs.

To this end, we have compiled this guidebook to record technical interview questions about GenAI & LLM. Complete with in-depth answers, this guide is designed to help you prepare for interviews, approach challenges with confidence, and gain a deeper understanding of the impact and potential of GenAI & LLM in shaping the future of AI and data science.

1. How to build a knowledge graph using an embedded dictionary in Python?

One way is to use a hash (a dictionary in Python, also called a key-value table), where the key is a word, token, concept, or category, such as "mathematics". Each key corresponds to a value, which is itself a hash: a nested hash. The keys in the nested hash are words related to the parent key in the parent hash, such as "calculus". The value is a weight: "calculus" has a high value because "calculus" and "mathematics" are related and often appear together; conversely, "restaurants" has a low value because "restaurants" and "mathematics" rarely appear together.

In an LLM, the nested hash may serve as an embedding (a method of mapping high-dimensional data to a low-dimensional space, usually used to convert discrete, non-continuous data into a continuous vector representation that is easier for a computer to process). Since a nested hash does not have a fixed number of elements, it handles sparse graphs much better than vector databases or matrices. It leads to faster algorithms and requires less memory.
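As a minimal sketch of the nested-hash idea, the snippet below builds a small graph from a handful of made-up documents. The weighting scheme (raw co-occurrence counts within a document) and the `build_graph` helper are assumptions chosen for illustration; the text above only says that related words should receive a high weight.

```python
from collections import defaultdict


def build_graph(documents):
    """Build graph[a][b] = number of documents in which words a and b co-occur."""
    graph = defaultdict(lambda: defaultdict(int))
    for doc in documents:
        words = sorted(set(doc.lower().split()))
        for a in words:
            for b in words:
                if a != b:
                    graph[a][b] += 1
    return graph


# Made-up toy corpus, for illustration only.
docs = [
    "mathematics calculus algebra",
    "mathematics calculus geometry",
    "restaurants menu mathematics",
]
graph = build_graph(docs)

# The nested hash under "mathematics": related words and their weights.
related = dict(graph["mathematics"])
```

Only pairs that actually co-occur are stored, so the memory used grows with the number of observed links rather than with the square of the vocabulary size.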

2. How to perform hierarchical clustering when the data contains 100 million keywords?

If you want to cluster keywords, then for each pair of keywords {A, B}, you can calculate the similarity between A and B to learn how similar the two words are. The goal is to generate clusters of similar keywords.

Standard Python libraries such as scikit-learn provide agglomerative clustering, also known as hierarchical clustering. However, in this example, they would typically require a 100 million x 100 million distance matrix, which is obviously not feasible. In practice, two random words A and B rarely appear together, so the distance matrix is very sparse. Solutions include methods suited to sparse graphs, such as the nested hashes discussed in question 1. One such approach is based on detecting the connected components of the underlying graph.
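The connected-components idea can be sketched as follows. This is an illustrative version under stated assumptions, not the author's implementation: the graph is a symmetric nested dictionary of similarity weights, and the `min_weight` threshold is a hypothetical parameter. Raising the threshold splits clusters into smaller ones, which is one simple way to obtain several levels of a hierarchy.

```python
def connected_components(graph, min_weight=1):
    """Cluster keywords by connected components of a sparse similarity graph.

    graph is a nested dict: graph[a][b] = similarity weight (assumed symmetric).
    Links weaker than min_weight are ignored.
    """
    seen = set()
    clusters = []
    for start in graph:
        if start in seen:
            continue
        seen.add(start)
        stack = [start]
        cluster = []
        while stack:  # iterative depth-first traversal
            node = stack.pop()
            cluster.append(node)
            for neighbor, weight in graph.get(node, {}).items():
                if weight >= min_weight and neighbor not in seen:
                    seen.add(neighbor)
                    stack.append(neighbor)
        clusters.append(sorted(cluster))
    return clusters


# Made-up similarity weights, for illustration only.
similarity = {
    "calculus": {"algebra": 5, "pizza": 1},
    "algebra": {"calculus": 5},
    "pizza": {"pasta": 4, "calculus": 1},
    "pasta": {"pizza": 4},
}
clusters = connected_components(similarity, min_weight=2)
```

Each keyword and each stored link is visited once, so the cost depends on the number of links actually present, not on the size of a full distance matrix.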

3. How do you crawl a large repository like Wikipedia to retrieve the underlying structure, not just the individual entries?

These repositories all embed structured elements into their web pages, making the content more structured than it appears at first glance. Some structural elements, such as metadata, are invisible to the naked eye. Others are visible and also present in the crawled data, such as indexes, related items, breadcrumbs, or categories. You can retrieve these elements separately to build a good knowledge graph or taxonomy. You may want to write your own crawler from scratch rather than relying on tools like Beautiful Soup. LLMs rich in structural information (such as xLLM) provide better results. Additionally, if your repository truly lacks structure, you can extend your scraped data with structures retrieved from external sources. This process is called "structure augmentation".
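To illustrate how structural elements can be pulled out separately from the body text, here is a small sketch that uses only Python's standard `html.parser` module. The HTML snippet and the class names `breadcrumbs` and `categories` are hypothetical; a real repository uses its own markup, which you would need to inspect first.

```python
from html.parser import HTMLParser


class StructureParser(HTMLParser):
    """Collect link texts found inside elements carrying selected class names."""

    def __init__(self, wanted_classes):
        super().__init__()
        self.wanted = set(wanted_classes)
        self.found = {name: [] for name in wanted_classes}
        self.section = None      # class name of the section being read
        self.section_tag = None  # tag name that opened the section
        self.depth = 0           # nesting depth of that tag name
        self.in_link = False

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if self.section is None:
            for name in classes:
                if name in self.wanted:
                    self.section, self.section_tag, self.depth = name, tag, 1
                    break
        elif tag == self.section_tag:
            self.depth += 1
        if tag == "a" and self.section is not None:
            self.in_link = True

    def handle_endtag(self, tag):
        if tag == "a":
            self.in_link = False
        if self.section is not None and tag == self.section_tag:
            self.depth -= 1
            if self.depth == 0:
                self.section = None

    def handle_data(self, data):
        if self.in_link and self.section is not None and data.strip():
            self.found[self.section].append(data.strip())


# Hypothetical page fragment, for illustration only.
page = """
<nav class="breadcrumbs"><a href="/s">Science</a> <a href="/m">Mathematics</a></nav>
<h1>Calculus</h1>
<p>Body text with a <a href="/x">stray link</a>.</p>
<div class="categories"><ul><li><a href="/c1">Analysis</a></li>
<li><a href="/c2">Differential equations</a></li></ul></div>
"""
parser = StructureParser(["breadcrumbs", "categories"])
parser.feed(page)
structure = parser.found
```

The breadcrumb trail gives the parent categories of the page and the category list gives its siblings, which is the kind of information a taxonomy or knowledge graph can be built from.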

4. How to enhance LLM embeddings with contextual tokens?

Embeddings are composed of tokens, the smallest text elements you can find in any document. You are not limited to two tokens such as "data" and "science"; you can have four: "data^science", "data", "science", and "data~science". The last one indicates that the term "data science" was found. The first means that both "data" and "science" were found, but at arbitrary positions within a given paragraph rather than next to each other. Such tokens are called multi-tokens or contextual tokens. They provide some useful redundancy, but if you are not careful you can end up with huge embeddings. Solutions include removing useless tokens (keeping the longest one) and using variable-size embeddings. Contextual tokens can help reduce LLM hallucinations.
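The token scheme described above can be sketched as below. The `contextual_tokens` helper and its simplifications are assumptions for illustration: words are split on non-alphanumeric characters, a pair that is adjacent anywhere in the paragraph only gets the `~` token, and the `^` token lists its two words in alphabetical order.

```python
import re
from itertools import combinations


def contextual_tokens(paragraph):
    """Return single tokens, adjacent pairs (a~b) and non-adjacent pairs (a^b)."""
    words = re.findall(r"[a-z0-9]+", paragraph.lower())
    tokens = set(words)

    # "a~b": a is immediately followed by b somewhere in the paragraph.
    adjacent = set(zip(words, words[1:]))
    tokens.update(f"{a}~{b}" for a, b in adjacent)

    # "a^b": both words occur in the paragraph but never next to each other.
    for a, b in combinations(sorted(set(words)), 2):
        if (a, b) not in adjacent and (b, a) not in adjacent:
            tokens.add(f"{a}^{b}")
    return tokens


tokens = contextual_tokens("Data science relies on statistics")
```

Even this five-word paragraph produces 15 tokens, which shows how quickly contextual tokens multiply and why pruning matters.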

5. How to implement self-tuning to eliminate many of the problems associated with model evaluation and training?

This applies to systems based on explainable AI, not black-box neural networks. Let the users of the application select hyperparameters and flag the ones they like. Use this information to find ideal hyperparameters and set them as defaults. This is automated reinforcement learning based on user input. It also lets each user choose a favorite set of hyperparameters depending on the desired results, making your application customizable. Within an LLM, performance can be further improved by allowing users to select specific sub-LLMs (e.g. based on search type or category). Adding a relevance score to each item in your output can also help fine-tune your system.
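One very simple way to turn user feedback into defaults is to pick the hyperparameter set that users flag most often. The sketch below assumes exactly that; the `default_from_favorites` helper and the hyperparameter names `min_weight` and `max_tokens` are hypothetical, and a production system would likely weight votes by recency or by relevance score.

```python
from collections import Counter


def default_from_favorites(favorites, fallback):
    """Return the hyperparameter set flagged most often, or fallback if none."""
    if not favorites:
        return dict(fallback)
    # Dicts are not hashable, so count them as sorted tuples of (name, value).
    counts = Counter(tuple(sorted(f.items())) for f in favorites)
    best, _ = counts.most_common(1)[0]
    return dict(best)


# Hypothetical hyperparameter sets flagged by three users.
favorites = [
    {"min_weight": 2, "max_tokens": 4},
    {"max_tokens": 4, "min_weight": 2},
    {"min_weight": 1, "max_tokens": 8},
]
default = default_from_favorites(favorites, fallback={"min_weight": 1, "max_tokens": 4})
```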

6. How to increase the speed of vector search by several orders of magnitude?

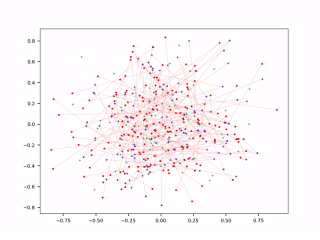

In an LLM, using variable-length embeddings greatly reduces the size of the embeddings. This speeds up the search for backend embeddings similar to those captured in the frontend prompt. However, it may require a different type of database, such as key-value tables. Reducing the size of the token and embedding tables is another solution: in a trillion-token system, 95% of tokens will never be retrieved to answer a prompt. They are just noise, so get rid of them. Using contextual tokens (see question 4) is another way to store information more compactly. Approximate nearest neighbor (ANN) search is then performed on the compressed embeddings; the probabilistic version (pANN) can run much faster, as illustrated in the figure. Finally, use a caching mechanism to store the most frequently accessed embeddings or queries for better real-time performance.

Probabilistic Approximate Nearest Neighbor Search (pANN)

As a rule of thumb, reducing the size of the training set by 50% gives better results, with much less overfitting. In an LLM, it is better to choose a few good input sources than to crawl the entire Internet. Having a dedicated LLM for each top-level category, rather than one size fits all, further reduces the number of embeddings: each prompt targets a specific sub-LLM rather than the entire database.
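Two of the ideas in this answer, variable-length embeddings stored in a key-value table and caching of frequent queries, can be sketched together. Note that this sketch performs an exact scan over a tiny table; it is not an implementation of ANN or pANN. The embeddings, weights, and the `search` helper are made up for illustration.

```python
import math
from functools import lru_cache

# Hypothetical variable-length embeddings: each key maps tokens to weights,
# and each nested dict holds only the tokens that matter for that key.
EMBEDDINGS = {
    "calculus": {"derivative": 0.9, "integral": 0.8, "limit": 0.5},
    "algebra": {"matrix": 0.9, "equation": 0.7},
    "cooking": {"recipe": 0.9},
}


def cosine(u, v):
    """Cosine similarity of two sparse vectors stored as dicts."""
    if len(u) > len(v):
        u, v = v, u  # iterate over the shorter vector
    dot = sum(w * v[t] for t, w in u.items() if t in v)
    norm_u = math.sqrt(sum(w * w for w in u.values()))
    norm_v = math.sqrt(sum(w * w for w in v.values()))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


@lru_cache(maxsize=1024)
def search(prompt_tokens):
    """Return the best-matching key for a tuple of prompt tokens, or None.

    The argument is a tuple so that it is hashable and can be cached.
    """
    query = {t: 1.0 for t in prompt_tokens}
    best_score, best_key = max(
        (cosine(query, emb), key) for key, emb in EMBEDDINGS.items()
    )
    return best_key if best_score > 0 else None


result = search(("integral", "derivative"))
```

Repeating the same query is answered from the cache without recomputing any similarity, and `search.cache_info()` reports how often that happens.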

7. What is the ideal loss function to get the best results from your model?

The best solution is to use the model evaluation metric as the loss function. This is rarely done because the loss function must be updated very quickly every time a neuron is activated in the neural network. With neural networks, an alternative is to compute the evaluation metric after each epoch and keep the solution from the epoch with the best evaluation score, rather than the one from the epoch with the smallest loss.
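The per-epoch selection strategy can be sketched independently of any particular framework. In the snippet below, `train_epoch` and `evaluate` are hypothetical callables standing in for a real training step and a real evaluation metric, and the loss and score values are made up so that the two criteria disagree.

```python
def train_with_metric_selection(train_epoch, evaluate, n_epochs):
    """Run n_epochs and keep the parameters with the best evaluation score.

    train_epoch(epoch) -> (params, loss)
    evaluate(params)   -> score, where higher is better
    """
    best = None
    for epoch in range(n_epochs):
        params, loss = train_epoch(epoch)
        score = evaluate(params)
        if best is None or score > best["score"]:
            best = {"epoch": epoch, "params": params, "loss": loss, "score": score}
    return best


# Made-up (loss, score) values per epoch, for illustration only:
# the loss keeps decreasing while the evaluation score peaks at epoch 1.
history = [(0.9, 0.60), (0.5, 0.82), (0.3, 0.78), (0.2, 0.75)]

best = train_with_metric_selection(
    train_epoch=lambda epoch: (epoch, history[epoch][0]),  # params = epoch index
    evaluate=lambda params: history[params][1],
    n_epochs=len(history),
)
```

Here the selected solution comes from epoch 1, while selecting by smallest loss would have returned epoch 3.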

I am currently working on a system where the evaluation metric and the loss function are the same. It is not based on neural networks. Initially, my evaluation metric was the multivariate Kolmogorov-Smirnov distance (KS). But on big data, atomic updates to KS are extremely difficult without a lot of computation. This makes KS unsuitable as a loss function, since you would need billions of atomic updates. But by replacing the cumulative distribution function with a probability density function with millions of bins, I was able to come up with a good evaluation metric that also works as a loss function.
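The text does not give the author's exact metric, so the following is only one possible reading of why a binned density makes atomic updates cheap. It assumes an L1 distance between two normalized histograms over the same bins: moving one observation between two bins changes only two terms of the sum, whereas a distance based on cumulative distributions would change at every bin in between. The `BinnedDistance` class and the counts are hypothetical.

```python
class BinnedDistance:
    """L1 distance between two histograms on the same bins, updated in O(1)."""

    def __init__(self, real_counts, synth_counts):
        self.real = list(real_counts)
        self.synth = list(synth_counts)
        self.n_real = sum(self.real)
        self.n_synth = sum(self.synth)
        # Full computation, done once.
        self.distance = sum(self._term(i) for i in range(len(self.real)))

    def _term(self, i):
        """Contribution of bin i to the distance."""
        return abs(self.real[i] / self.n_real - self.synth[i] / self.n_synth)

    def move(self, src, dst):
        """Move one synthetic observation from bin src to bin dst."""
        if src == dst:
            return self.distance
        # Only two bins change, so only two terms are recomputed.
        self.distance -= self._term(src) + self._term(dst)
        self.synth[src] -= 1
        self.synth[dst] += 1
        self.distance += self._term(src) + self._term(dst)
        return self.distance


# Made-up bin counts, for illustration only.
metric = BinnedDistance(real_counts=[2, 4, 2], synth_counts=[4, 2, 2])
before = metric.distance
after = metric.move(0, 1)
```

With millions of bins and billions of moves, each update still touches two bins; in practice the running total would need an occasional full recomputation to limit floating-point drift.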

Original title: 7 Cool Technical GenAI & LLM Job Interview Questions, author: Vincent Granville

Link: https://www.datasciencecentral.com/7-cool-technical-genai-llm-job-interview-questions/.
