Generating a dataset with GPT-3.5! A new SOTA for image editing from Peking University, Tiangong AI, and other teams accurately simulates physical-world scenes

Many methods can produce high-quality image edits, but they struggle to depict the real physical world accurately.

So, try EditWorld ("Edit the World").

Researchers from Peking University, Tiamat AI, Tiangong AI, and Mila Labs proposed EditWorld, which introduces a new editing task: world-instructed image editing. The work defines and categorizes instructions based on various world scenarios.

Using a set of pre-trained models, such as GPT-3.5, Video-LLaVA, and SDXL, the team built a multimodal dataset of world instructions.

EditWorld, a diffusion-based image editing model, was trained on this dataset. On the new task it performs significantly better than existing editing methods, achieving SOTA.

A New SOTA for Image Editing

Existing methods achieve high-quality image editing in a variety of ways, including but not limited to text control, dragging operations, and inpainting. Among them, instruction-based editing has received widespread attention because it is easy to use.

Although these image editing methods can produce high-quality results, they still struggle with world dynamics, that is, the way scenes actually change visually in the physical world.

As shown in Figure 1, neither InstructPix2Pix nor MagicBrush can generate reasonable editing results.

To solve this problem, the team introduced a new task called world-instructed image editing, so that image editing can reflect "world dynamics" in both the real physical world and virtual media.

Specifically, they defined and classified various world-dynamic instructions and created a new multimodal training dataset based on these instructions, which contains a large number of input-instruction-output triples.
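
One way to picture an input-instruction-output triple is as a small record type. The sketch below is only illustrative: the class name `EditTriple`, the field names, the file paths, the example instruction, and the `is_valid` check are all assumptions made here, not the team's actual data format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EditTriple:
    """One hypothetical training sample: input image, instruction, output image."""
    input_image: str   # assumed: path to the image before the edit
    instruction: str   # the world-dynamic instruction, as plain text
    output_image: str  # assumed: path to the image after the edit


def is_valid(sample: EditTriple) -> bool:
    """Reject samples with an empty field or an identical input and output."""
    fields = (sample.input_image, sample.instruction, sample.output_image)
    return all(f.strip() for f in fields) and sample.input_image != sample.output_image


# Made-up example values, for illustration only.
sample = EditTriple(
    input_image="data/0001_in.png",
    instruction="What happens if the ice cube is left in the sun?",
    output_image="data/0001_out.png",
)
print(is_valid(sample))
```

A frozen dataclass keeps each sample immutable, which makes it harder to corrupt a triple by accident while filtering.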

Finally, the team trained a text-guided diffusion model on this carefully crafted dataset and proposed a zero-shot image manipulation strategy to achieve world-instructed image editing.

Based on task scenarios in the real world and in virtual media, world-instructed image editing is divided into 7 categories. Each category is defined and introduced, and a data sample is provided for it.

The team then designed two branches to build the dataset: text-to-image generation and video storyboard extraction.

The text-to-image branch is meant to broaden the variety of scenes in the data. In this branch, the team first uses GPT to generate text quadruples (an input image description, an instruction, an output image description, and keywords). It then generates an image for each of the input and output descriptions, and uses the attention map corresponding to the keywords to locate the edit region and obtain an edit mask. To keep the key features of the two images consistent, the team introduces IP-Adapter, an image prompt adaptation method. Finally, the team combines IP-Adapter and ControlNet with the Canny map of the output image and the image prompt features of the input image, and uses image inpainting to adjust the output image, yielding more effective editing data.
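
The step of turning a keyword's attention map into an edit mask can be sketched as a simple threshold. This is a minimal illustration, not the team's implementation: the min-max normalization, the `threshold` value, the function name `attention_to_mask`, and the toy 3x3 map are all assumptions.

```python
def attention_to_mask(attention, threshold=0.5):
    """Binarize a 2D attention map (list of rows) after min-max normalization.

    Cells whose normalized attention reaches the threshold are marked 1
    (inside the edit region); everything else is marked 0.
    """
    flat = [value for row in attention for value in row]
    lowest, highest = min(flat), max(flat)
    span = highest - lowest
    if span == 0:
        # A flat map carries no localization signal, so nothing is selected.
        return [[0 for _ in row] for row in attention]
    return [
        [1 if (value - lowest) / span >= threshold else 0 for value in row]
        for row in attention
    ]


# Toy attention map: the keyword attends mostly to the lower-right area.
attention = [
    [0.1, 0.2, 0.1],
    [0.2, 0.9, 0.8],
    [0.1, 0.7, 0.2],
]
mask = attention_to_mask(attention)
for row in mask:
    print(row)
```

In practice a mask like this would usually be smoothed or dilated before inpainting; that refinement is left out to keep the sketch short.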

After the text-to-image branch produced scene-rich data, the team added real data by extracting high-quality samples from video keyframes. Specifically, the team picked two frames from a video storyboard that are strongly correlated but structurally very different, used them as the start and end frames, and cut out a new storyboard between them. A large multimodal model then described the change within that storyboard. The start and end frames serve as the input and output images, and the resulting description serves as the instruction, which gives the required editing data.
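
The frame-selection idea (strongly correlated, yet structurally very different) can be sketched as a constrained search over frame pairs. The article does not say which metrics the team used, so everything here is an assumption: `pick_frame_pair`, the `min_correlation` cutoff, and the two toy metrics, which stand in for real similarity and structural-difference measures.

```python
from itertools import combinations


def pick_frame_pair(frames, correlation, difference, min_correlation=0.8):
    """Return indices (i, j), i < j, of the most different pair that is
    still correlated enough, or None if no pair passes the cutoff."""
    best_pair, best_difference = None, float("-inf")
    for i, j in combinations(range(len(frames)), 2):
        if correlation(frames[i], frames[j]) < min_correlation:
            continue  # frames probably show unrelated content
        score = difference(frames[i], frames[j])
        if score > best_difference:
            best_pair, best_difference = (i, j), score
    return best_pair


# Toy stand-ins for real metrics (assumptions, for illustration only).
def same_scene(a, b):
    return 1.0 if a["scene"] == b["scene"] else 0.0


def mean_abs_diff(a, b):
    pairs = list(zip(a["pixels"], b["pixels"]))
    return sum(abs(x - y) for x, y in pairs) / len(pairs)


frames = [
    {"scene": "kitchen", "pixels": [0, 0, 0, 0]},
    {"scene": "kitchen", "pixels": [0, 0, 10, 10]},
    {"scene": "kitchen", "pixels": [10, 10, 10, 10]},
    {"scene": "street", "pixels": [200, 200, 200, 200]},
]
pair = pick_frame_pair(frames, same_scene, mean_abs_diff)
print(pair)
```

Passing the two metrics in as functions keeps the selection logic independent of whichever similarity and structure measures are actually used.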

The team then manually rechecked the generated data to further improve its quality.

The team used the dataset to fine-tune the InstructPix2Pix model. To protect non-edited regions and achieve more precise edits, the team also proposed a post-edit strategy.
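
The article does not describe how the post-edit strategy works. One common way to protect non-edited regions, shown here purely as an assumption and not as the team's method, is to composite the edited image back onto the original through a binary edit mask. The function name `composite_with_mask` and the toy 2x2 images are hypothetical.

```python
def composite_with_mask(original, edited, mask):
    """Take edited pixels where mask is 1 and original pixels elsewhere.

    All three arguments are 2D lists with the same number of rows.
    """
    if not (len(original) == len(edited) == len(mask)):
        raise ValueError("original, edited, and mask must have the same height")
    return [
        [e if m else o for o, e, m in zip(orig_row, edit_row, mask_row)]
        for orig_row, edit_row, mask_row in zip(original, edited, mask)
    ]


# Toy 2x2 grayscale images: only the top-right pixel lies in the edit region.
original = [[1, 1], [1, 1]]
edited = [[9, 9], [9, 9]]
mask = [[0, 1], [0, 0]]
result = composite_with_mask(original, edited, mask)
print(result)
```

With this kind of compositing, any drift the diffusion model introduces outside the mask is discarded, at the cost of possible seams along the mask boundary.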

In the end, the results show that the team's approach handles world-instructed image editing well.

Paper link:

https://www.php.cn/link/154d7da9e669c75ee317d46614381dd8

Code link:

https://www.php.cn/link/e6da32eef072f987685b6eddca072d4f

The above is the detailed content of Generate dataset with GPT-3.5! New SOTA for image editing by Peking University Tiangong and other teams can accurately simulate physical world scenes. For more information, please follow other related articles on the PHP Chinese website!

An easy-to-understand explanation of how to make inventory management more efficient using ChatGPT!May 14, 2025 am 03:44 AM

An easy-to-understand explanation of how to make inventory management more efficient using ChatGPT!May 14, 2025 am 03:44 AMEasy to implement even for small and medium-sized businesses! Smart inventory management with ChatGPT and Excel Inventory management is the lifeblood of your business. Overstocking and out-of-stock items have a serious impact on cash flow and customer satisfaction. However, the current situation is that introducing a full-scale inventory management system is high in terms of cost. What you'd like to focus on is the combination of ChatGPT and Excel. In this article, we will explain step by step how to streamline inventory management using this simple method. Automate tasks such as data analysis, demand forecasting, and reporting to dramatically improve operational efficiency. moreover,

An easy-to-understand explanation of how to check and switch versions of ChatGPT!May 14, 2025 am 03:43 AM

An easy-to-understand explanation of how to check and switch versions of ChatGPT!May 14, 2025 am 03:43 AMUse AI wisely by choosing a ChatGPT version! A thorough explanation of the latest information and how to check ChatGPT is an ever-evolving AI tool, but its features and performance vary greatly depending on the version. In this article, we will explain in an easy-to-understand manner the features of each version of ChatGPT, how to check the latest version, and the differences between the free version and the paid version. Choose the best version and make the most of your AI potential. Click here for more information about OpenAI's latest AI agent, OpenAI Deep Research ⬇️ [ChatGPT] OpenAI D

Explaining the reasons why you cannot use your credit card with ChatGPT's paid plan and how to deal with itMay 14, 2025 am 03:32 AM

Explaining the reasons why you cannot use your credit card with ChatGPT's paid plan and how to deal with itMay 14, 2025 am 03:32 AMTroubleshooting Guide for Credit Card Payment with ChatGPT Paid Subscriptions Credit card payments may be problematic when using ChatGPT paid subscription. This article will discuss the reasons for credit card rejection and the corresponding solutions, from problems solved by users themselves to the situation where they need to contact a credit card company, and provide detailed guides to help you successfully use ChatGPT paid subscription. OpenAI's latest AI agent, please click ⬇️ for details of "OpenAI Deep Research" 【ChatGPT】Detailed explanation of OpenAI Deep Research: How to use and charging standards Table of contents Causes of failure in ChatGPT credit card payment Reason 1: Incorrect input of credit card information Original

An easy-to-understand explanation of how to create a VBA macro in ChatGPT!May 14, 2025 am 02:40 AM

An easy-to-understand explanation of how to create a VBA macro in ChatGPT!May 14, 2025 am 02:40 AMFor beginners and those interested in business automation, writing VBA scripts, an extension to Microsoft Office, may find it difficult. However, ChatGPT makes it easy to streamline and automate business processes. This article explains in an easy-to-understand manner how to develop VBA scripts using ChatGPT. We will introduce in detail specific examples, from the basics of VBA to script implementation using ChatGPT integration, testing and debugging, and benefits and points to note. With the aim of improving programming skills and improving business efficiency,

I can't use the ChatGPT plugin function! Explaining what to do in case of an errorMay 14, 2025 am 01:56 AM

I can't use the ChatGPT plugin function! Explaining what to do in case of an errorMay 14, 2025 am 01:56 AMChatGPT plugin cannot be used? This guide will help you solve your problem! Have you ever encountered a situation where the ChatGPT plugin is unavailable or suddenly fails? The ChatGPT plugin is a powerful tool to enhance the user experience, but sometimes it can fail. This article will analyze in detail the reasons why the ChatGPT plug-in cannot work properly and provide corresponding solutions. From user setup checks to server troubleshooting, we cover a variety of troubleshooting solutions to help you efficiently use plug-ins to complete daily tasks. OpenAI Deep Research, the latest AI agent released by OpenAI. For details, please click ⬇️ [ChatGPT] OpenAI Deep Research Detailed explanation:

Does ChatGPT not follow the character count specification? A thorough explanation of how to deal with this!May 14, 2025 am 01:54 AM

Does ChatGPT not follow the character count specification? A thorough explanation of how to deal with this!May 14, 2025 am 01:54 AMWhen writing a sentence using ChatGPT, there are times when you want to specify the number of characters. However, it is difficult to accurately predict the length of sentences generated by AI, and it is not easy to match the specified number of characters. In this article, we will explain how to create a sentence with the number of characters in ChatGPT. We will introduce effective prompt writing, techniques for getting answers that suit your purpose, and teach you tips for dealing with character limits. In addition, we will explain why ChatGPT is not good at specifying the number of characters and how it works, as well as points to be careful about and countermeasures. This article

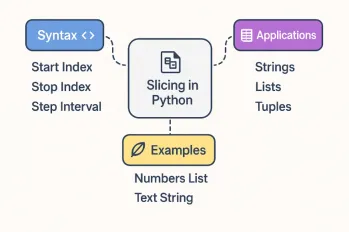

All About Slicing Operations in PythonMay 14, 2025 am 01:48 AM

All About Slicing Operations in PythonMay 14, 2025 am 01:48 AMFor every Python programmer, whether in the domain of data science and machine learning or software development, Python slicing operations are one of the most efficient, versatile, and powerful operations. Python slicing syntax a

An easy-to-understand explanation of how to use ChatGPT to create quotes!May 14, 2025 am 01:44 AM

An easy-to-understand explanation of how to use ChatGPT to create quotes!May 14, 2025 am 01:44 AMThe evolution of AI technology has accelerated business efficiency. What's particularly attracting attention is the creation of estimates using AI. OpenAI's AI assistant, ChatGPT, contributes to improving the estimate creation process and improving accuracy. This article explains how to create a quote using ChatGPT. We will introduce efficiency improvements through collaboration with Excel VBA, specific examples of application to system development projects, benefits of AI implementation, and future prospects. Learn how to improve operational efficiency and productivity with ChatGPT. Op

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

mPDF

mPDF is a PHP library that can generate PDF files from UTF-8 encoded HTML. The original author, Ian Back, wrote mPDF to output PDF files "on the fly" from his website and handle different languages. It is slower than original scripts like HTML2FPDF and produces larger files when using Unicode fonts, but supports CSS styles etc. and has a lot of enhancements. Supports almost all languages, including RTL (Arabic and Hebrew) and CJK (Chinese, Japanese and Korean). Supports nested block-level elements (such as P, DIV),

SublimeText3 Chinese version

Chinese version, very easy to use

WebStorm Mac version

Useful JavaScript development tools

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver Mac version

Visual web development tools